Introduction

In order to understand how to ‘work the system’, you have to understand how the system works.

A few years back, Demis Hassabis showed the progression of a DeepMind Artificial Intelligence agent as it learned to play video games, including Atari Breakout.

You can watch for yourself here…

There is one comment that was made that I return to time and time again.

Hassabis says the agent (Ai program) “ruthlessly exploits the weakness in the system it has found”. As you’ll see in the video, with the Ai’s goal being to win, it discovers something inherently faulty within the system and exploits it.

(For comedy value, I like to think there is a ‘moment’ when the program pauses, realises what it has discovered and ‘thinks’ to itself that this is a better way to go - watch the video again and you’ll see what I mean.)

What is the ‘Goal’ of your system?

This idea of ‘Goal-directed systems’ is central to Cybernetics, defined as “the study of goal-directed, self-regulating systems” (source). And it is inevitable that you have business goals relating to growth, and intentions in regard to revenue.

The challenge you may face though is this: how connected are people’s day to day activities to these goals?

This is not about whether people are ‘aligned to the company’s culture and vision’ (as important as that may be). It is much simpler than that: does doing ‘X’, which gets ‘Y’, get them closer to ‘Z’ (the goal)?

From a non-systems perspective (if such a thing could truly exist) one may ask, “what needs to change in their attitudes and behaviours in order for them to better move toward ‘Z’?”

The reality is that, with a Digital Cybernetic mindset, ‘Z’ becomes the integral purpose of the system. And if doing ‘X’ doesn’t move toward ‘Z’, then the role and function of the person performing ‘X’ doesn’t enhance the likelihood of the system performing as it is intended i.e. to achieve ‘Z’. In which case, from this perspective, how does paying that person help achieve the overarching Goal?

“The purpose of a system is what it does. There is after all, no point in claiming that the purpose of a system is to do what it constantly fails to do.”

Stafford Beer

The role of learning within an ‘Organization’ (or Organisation)

The word ‘organisation’ comes from the Greek word ‘organon’, meaning tool or instrument. So an Organisation is ‘something’ that has a purpose - i.e. this purpose is inherent within the system.

The purpose of your business is to achieve ‘a score’ - you just have to decide “what?”

Once you have decided, organising around this purpose becomes easier, and your target should become clearer.

The question then becomes - is there a ‘learning loop’ built into the ‘processing of the people’ within the organisation? Without feedback, there is no learning - and without learning, you are left only with luck.

Repeatable actions delivering predictable results, time and time again, will prove the model that you are running (as a business) - i.e. if we do enough X, and get enough Y as a result, this will get us Z within ‘Q’ space of time.
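As a rough illustration of that ‘X gets Y gets Z within Q’ model, here is a minimal sketch of the arithmetic. The function names and the example ratios (five X per Y, four Y per Z, a 13-week ‘Q’) are assumptions made up for the example, not figures from any real business.

```python
import math


def actions_needed(target_z, xs_per_y, ys_per_z):
    """Total X actions needed to expect `target_z` results.

    xs_per_y: how many X it takes, on average, to get one Y (assumed ratio).
    ys_per_z: how many Y it takes, on average, to get one Z (assumed ratio).
    """
    if min(target_z, xs_per_y, ys_per_z) <= 0:
        raise ValueError("all inputs must be positive")
    return target_z * xs_per_y * ys_per_z


def actions_per_week(total_x, weeks_q):
    """Spread the total over the 'Q' period, rounding up to whole actions."""
    return math.ceil(total_x / weeks_q)


# Hypothetical example: 10 deals (Z), 5 emails (X) per reply (Y), 4 replies per deal.
total = actions_needed(target_z=10, xs_per_y=5, ys_per_z=4)
print(total, actions_per_week(total, weeks_q=13))
```

If the real numbers drift away from the assumed ratios, that difference is exactly the feedback the model needs in order to be corrected.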

Think about it using a very simple example:

Scenario A - no email tracking software

You send an email to a prospect.

They don’t reply.

What do you do?

Scenario B - email tracking software

You send an email to a prospect.

They don’t reply.

But the tracking software indicates they’ve opened the email and ‘clicked the link’.

What do you do?

Without giving a prescribed answer to this, the reality is that the feedback you’ve received after sending the email, i.e. that they’ve a) opened it, and b) clicked a link, should increase the available options you have.

Not using email tracking software yet? It’s free. Opt in and I’ll send you the information.

Note: asking yourself in any situation, “what is the outcome you are seeking to achieve?” should help you focus on the available steps and stages that will lead you toward the overarching system goal.

The nature of the technological system used in the Scenarios above will determine whether a person has the feedback available (opens/clicks). Whether the person operating that element of the system can respond appropriately (i.e. in a way likely to move them toward their contribution to ‘the goal’) will in part be down to behavioural flexibility.

This leads us nicely into the concept of Requisite Variety.

Requisite variety

The 1950s cyberneticist W. Ross Ashby formulated the premise that has become known as Ashby’s Law of Requisite Variety, which states “for a system to be stable, the number of states that its control mechanism is capable of attaining (its variety) must be greater than or equal to the number of states in the system being controlled.”

As Naughton continues, “In colloquial terms Ashby’s Law has come to be understood as a simple proposition: if a system is to be able to deal successfully with the diversity of challenges that its environment produces, then it needs to have a repertoire of responses which is (at least) as nuanced as the problems thrown up by the environment.”
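To make the idea a little more tangible, here is a toy sketch (my own simplification, not Ashby’s formal treatment). The environment throws up one of `n_states` disturbances, the controller answers with one response from its repertoire, and the ‘outcome’ is whatever is left uncorrected, with 0 meaning fully on target. All names and the modular arithmetic are assumptions chosen purely for illustration.

```python
def best_response(disturbance, responses, n_states):
    """Pick the response that leaves the smallest uncorrected remainder."""
    return min(responses, key=lambda r: (disturbance - r) % n_states)


def outcome_variety(n_states, responses):
    """Count how many different outcomes the controller cannot avoid."""
    outcomes = set()
    for disturbance in range(n_states):
        response = best_response(disturbance, responses, n_states)
        outcomes.add((disturbance - response) % n_states)
    return len(outcomes)


# Six possible disturbances, three controllers with different repertoires.
print(outcome_variety(6, range(6)))  # as many responses as disturbances
print(outcome_variety(6, [0, 3]))    # only two responses
print(outcome_variety(6, [0]))       # a single fixed response
```

In this toy setup only the controller with a full repertoire can hold the outcome to a single state. The fewer responses it has, the more of the environment’s variety leaks through into the results.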

In terms of the concept of ‘stability’ for a growing business, think of it this way: if you cannot read the feedback from the environment as to the responses of the actions taken, then you are blind to the reality in which you are operating.

Check out 2.41 mins onward for an application, from Stafford Beer.

“...another very simple example, and far more serious, is how do finance ministers try and run the economy. You know, think about it for a minute. They try to control the money supply, if they belong to Milton Friedman. They try and work through controlling interest rates, and that's about it. Do you seriously think that has requisite variety? So how does Ashby's law come out in that case? It comes out: whatever provisions you make in a reductionist sense in the Finance Act, for taxation purposes, you will find that the business public, with its lawyers and its accountants and so forth, has much more variety than you can put into the law.”

Why you need to think more like a machine

Take a look at this video of Virtual Cars learning to navigate a course, and eventually reach their goal - an end point:

And it is the relentless exploration, with feedback and ‘learning’ built into the system, that allows the goal to be achieved.

Taking this further (and I’m sure I’ll get corrected if I’m mixing up neural nets and deep learning, as it’s not my territory), take a look at the video below, especially 7.40 to 9.03 minutes, which you can read in the extract below:

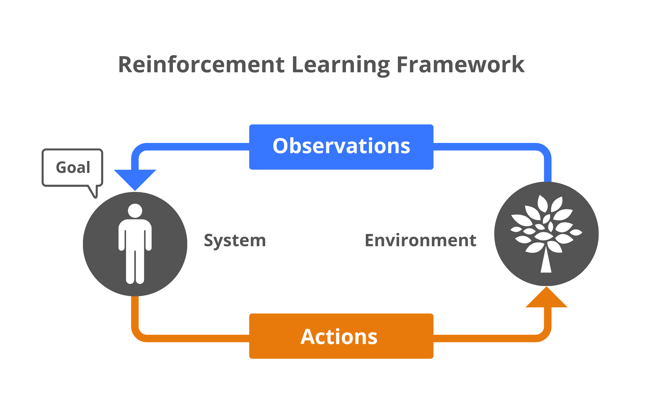

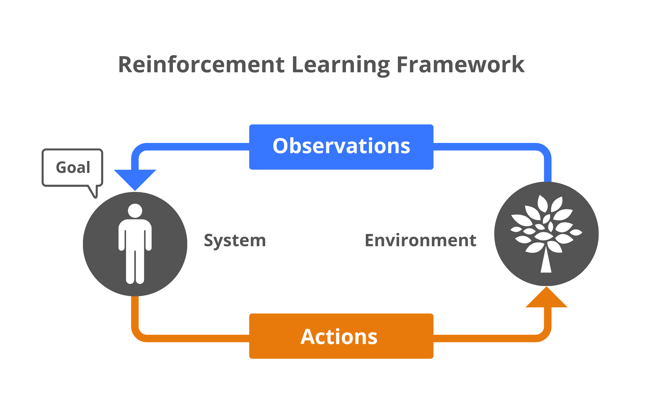

Demis Hassabis says, “It's a very simple framework to describe, and I'm just going to describe it with this simple diagram. So on the left-hand side here you have the system itself, the AI system and the AI system finds itself in some kind of environment that it's trying to achieve a goal in, and that environment could be real world or virtual. Now the system only interacts with the environment in two ways. So firstly it gets observations about the environment through its sensory apparatus. We normally use vision at DeepMind, but you could use other modalities and these observations are always noisy and incomplete. So unlike the game of chess, the real world is actually very noisy and messy, and you never have full information about what's going on. The job of the system is to build the best model of the world out there, statistical model of the world out there based on these noisy observations and once it has that model of the world, the second job of the system is to pick the best action that will get it closest towards its goal from the set of actions that are available to it at that moment in time. Once the system has decided which action that is, it outputs that action, that action gets executed it may or may not make some change to the environment, and that drives a new observation. This whole system although it's very simply described in this diagram, it has lots and lots of hidden complexities. If we could solve everything behind this diagram, that would be enough for intelligence.”
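The loop Hassabis describes (noisy observation, update the model, pick the best available action, act, observe again) can be sketched in a few lines. This is only a toy under my own assumptions: a one-dimensional ‘world’, a simple averaging ‘model’ and three possible actions. It is not how DeepMind’s agents are built, and every class and function name here is hypothetical.

```python
import random

ACTIONS = (-1, 0, 1)  # the set of actions available at each moment


class Environment:
    """A toy world: a hidden position the agent never sees exactly."""

    def __init__(self, start=0, noise=0.4, seed=0):
        self.position = start
        self.noise = noise
        self.rng = random.Random(seed)

    def observe(self):
        # Observations are noisy: the true position plus a small random error.
        return self.position + self.rng.uniform(-self.noise, self.noise)

    def step(self, action):
        self.position += action


class Agent:
    def __init__(self, goal):
        self.goal = goal
        self.estimate = None  # the agent's model of where it is

    def update_model(self, observation, last_action):
        if self.estimate is None:
            self.estimate = observation
        else:
            # Blend what the model predicted with what was just observed.
            predicted = self.estimate + last_action
            self.estimate = 0.5 * predicted + 0.5 * observation

    def choose_action(self):
        # Pick the action expected to leave the agent closest to its goal.
        return min(ACTIONS, key=lambda a: abs(self.estimate + a - self.goal))


def run(goal=10, steps=30, seed=0):
    env, agent, action = Environment(seed=seed), Agent(goal), 0
    for _ in range(steps):
        agent.update_model(env.observe(), action)  # observation -> model
        action = agent.choose_action()             # model -> action
        env.step(action)                           # action -> new observation
    return env.position


print(run())
```

Remove the `observe` step and the agent has nothing to steer by, which is the same point as the email example earlier: without feedback, there is no learning.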

Now, I am going to introduce a word into this article that will make your toes curl...manipulation.

Check out this video around the 33 mins mark:

Stafford Beer says “...control in a regulatory sense about how you were steering isn't about bullying anybody; isn't about knowing the machinery of doing it. It is a question of having something that you want to achieve and finding ways that work of achieving it. Manipulating a system, if you will, so that it produces the desired result.”

The reason I am bringing the concepts of Requisite Variety and ‘Manipulation’ into this article as ‘qualities’, is simple - it is what Ai can and will do.

There was a recent report that “Over the course of hundreds of millions of rounds of game play, the agents developed several strategies and counter-strategies.”

Source

The report continues, “At first, the researchers at OpenAI believed that this was the last phase of game play, but finally, at the 380-million-game mark, two more strategies emerged. The seekers once again developed a strategy to break into the hiders’ fort by using a locked ramp to climb onto an unlocked box, then “surf” their way on top of the box to the fort and over its walls. In the final phase, the hiders once again learned to lock all the ramps and boxes in place before building their fort.”

Moving forward, putting it simply, you are going to need systems with built-in Ai to compete and win the game you’re playing - especially if you are competing against companies that have Ai ‘baked into the system’.

In the meantime, maybe you can simply make the most of the thinking behind Digital Cybernetics...

Can you really ‘think like a machine’?

Leaving aside that machines technically don’t ‘think’, and that you cannot process the volume of data that even the most (relatively) basic machine (e.g. a scientific calculator) can, there is a role for this premise when considering Goal-Directed Systems.

If you are an operator within that system, in control of ‘moving levers’ (e.g. resourcing ‘this’ or ‘that’), then knowing ‘how to best steer’ (of course, the foundation of Cybernetics) is obviously an advantage. That is the reason to understand the ‘wiring under the boards’, not at a programming level (code) but at the level of connected systems (information transfer, whether one-way or two-way). When you understand how the system ‘is working’ to produce the current results, you can adjust the levers at the right time and in the right place to improve the likelihood of achieving the goal.

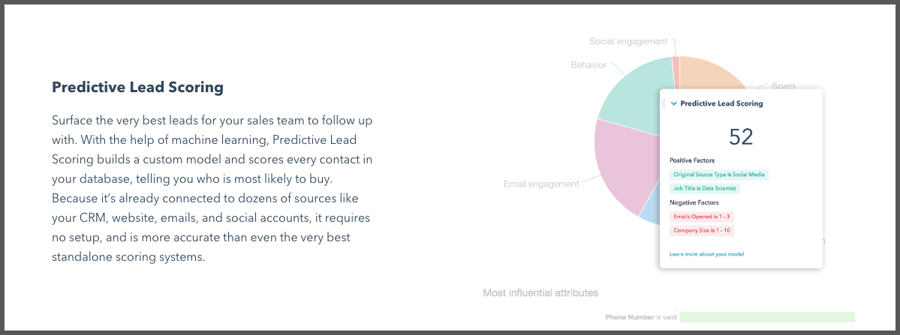

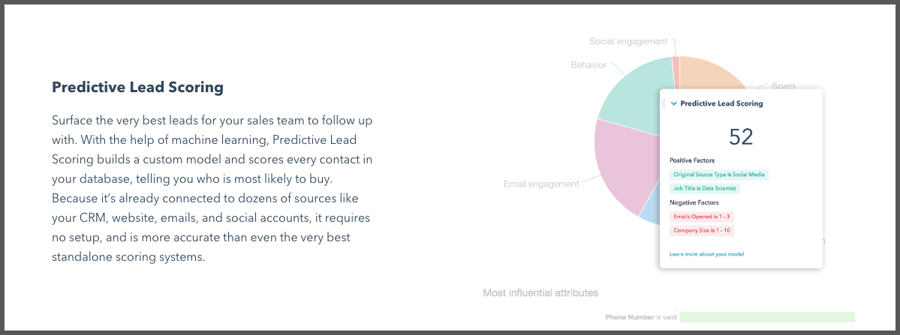

Putting it simply, if you know which leads are more likely to convert, based upon the feedback from historical data on even the single factor of ‘Source’, you can pay more attention to them, e.g. elevating them in the sales process by prioritising the call.

When you add in other factors, such as engagement (like the number of emails opened), Company size (matching your criteria) and a suitable ‘Job Title’, you can allocate a Score to those leads.

This is (basically) how HubSpot Lead Scoring works:

Maybe there is a way for you to measure and track, but the reality is simple: let the machine do the thinking.
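To show the kind of ‘thinking’ being handed over, here is a minimal sketch of a points-based lead score using the factors mentioned above (Source, emails opened, Company size and Job Title). The point values, thresholds and field names are all invented for the example, and this is not HubSpot’s actual scoring logic or API.

```python
from dataclasses import dataclass

# Hypothetical weights: in practice these would come from your own historical data.
SOURCE_POINTS = {"referral": 30, "organic_search": 20, "paid_ad": 10}
TARGET_TITLES = {"ceo", "sales director", "marketing director"}


@dataclass
class Lead:
    name: str
    source: str
    emails_opened: int
    company_size: int
    job_title: str


def score(lead, min_size=50, max_size=500):
    """Add up points for each factor that matches the (assumed) ideal profile."""
    points = SOURCE_POINTS.get(lead.source, 0)
    points += min(lead.emails_opened, 5) * 5  # engagement, capped at 5 opens
    if min_size <= lead.company_size <= max_size:
        points += 20
    if lead.job_title.lower() in TARGET_TITLES:
        points += 15
    return points


def prioritise(leads):
    """Highest-scoring leads first, i.e. the calls to make first."""
    return sorted(leads, key=score, reverse=True)


leads = [
    Lead("B", "paid_ad", 0, 10, "Intern"),
    Lead("A", "referral", 3, 120, "CEO"),
]
for lead in prioritise(leads):
    print(lead.name, score(lead))
```

The useful part is less the code than the loop around it: as results come in, the weights get revisited, which is the learning loop applied to the sales process.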

Finally...

A Goal-Directed Business system is ‘Organised’ and ‘Optimised’ with the intent of achieving its inherent purpose, i.e. that goal. The issue many of the businesses we come across as Consultants face is the lack of a ‘methodology’ for implementing both aspects.

Ai will help you reinforce the learning of ‘the patterns of success’ within your organisation, but no matter how smart an Ai system you employ right now, there (almost certainly) need to be ‘humans’ at the helm - out of necessity. Pursuing ‘Goals’ at all costs, for instance, is obviously going to be detrimental: you may want to increase the temperature in your house, but you don’t want to do so by burning the floorboards. Instead, ‘ecologically sound’ goals need to be given as targets, with Ai’s own version of ‘thinking’ being brought to bear on how to reach them.
